Gulimall (谷粒商城) - Advanced - 73 - Mall Business - Distributed Transactions - Controlling Distributed Transactions with Seata

I. Seata Concepts

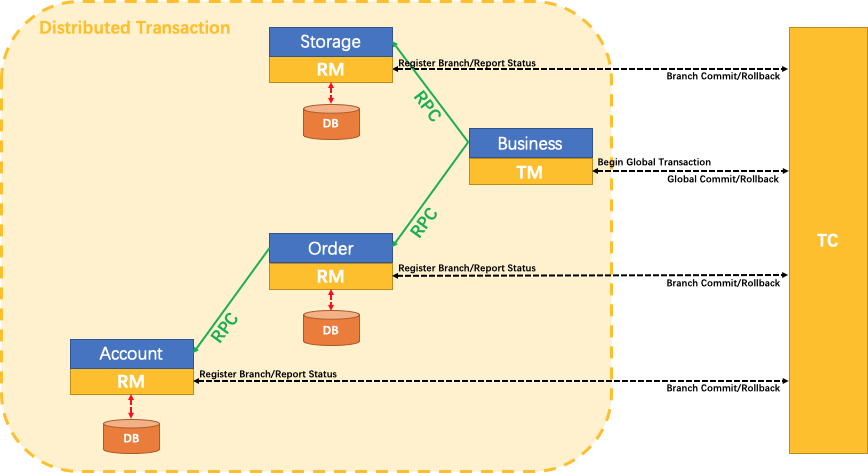

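Seata is an open-source distributed transaction solution that aims to provide high-performance, easy-to-use distributed transaction services. It offers the AT, TCC, SAGA, and XA transaction modes, giving users a one-stop distributed transaction solution.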

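Seata terminology

- TC (Transaction Coordinator): maintains the state of global and branch transactions and drives the global transaction to commit or roll back.

- TM (Transaction Manager): defines the scope of a global transaction: it begins the global transaction and commits or rolls it back.

- RM (Resource Manager): manages the resources that branch transactions work on, talks to the TC to register branch transactions and report their status, and drives branch transactions to commit or roll back.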
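The division of labour among the three roles can be sketched roughly as below. This is only a toy model under loud assumptions: the types `ResourceManager`, `TransactionCoordinator`, `TransactionManager`, and `SeataRolesDemo` are hypothetical names invented for illustration and are not Seata classes, and the branches are registered directly from the business code, whereas in Seata the RM registers them for you.

```java
import java.util.ArrayList;
import java.util.List;
import java.util.function.Consumer;

// Hypothetical RM: owns one branch transaction on one resource.
interface ResourceManager {
    String name();
    void commitBranch(String xid);
    void rollbackBranch(String xid);
}

// Hypothetical TC: tracks the branches of a global transaction and drives them.
class TransactionCoordinator {
    private final List<ResourceManager> branches = new ArrayList<>();
    private final List<String> events = new ArrayList<>();
    private int nextId = 1;

    String begin() {
        branches.clear();
        String xid = "xid-" + nextId++;
        events.add("begin " + xid);
        return xid;
    }

    void registerBranch(String xid, ResourceManager rm) {
        branches.add(rm);
        events.add("register " + rm.name());
    }

    void commit(String xid) {
        for (ResourceManager rm : branches) {
            rm.commitBranch(xid);
            events.add("commit " + rm.name());
        }
    }

    void rollback(String xid) {
        // Undo branches in reverse registration order.
        for (int i = branches.size() - 1; i >= 0; i--) {
            ResourceManager rm = branches.get(i);
            rm.rollbackBranch(xid);
            events.add("rollback " + rm.name());
        }
    }

    List<String> events() { return events; }
}

// Hypothetical TM: defines where the global transaction begins and ends.
class TransactionManager {
    private final TransactionCoordinator tc;
    TransactionManager(TransactionCoordinator tc) { this.tc = tc; }

    boolean runGlobal(Consumer<String> business) {
        String xid = tc.begin();
        try {
            business.accept(xid);
            tc.commit(xid);
            return true;
        } catch (RuntimeException e) {
            tc.rollback(xid);
            return false;
        }
    }
}

class SeataRolesDemo {
    static ResourceManager rm(String name) {
        return new ResourceManager() {
            public String name() { return name; }
            public void commitBranch(String xid) { }
            public void rollbackBranch(String xid) { }
        };
    }

    // Runs one global transaction with two branches and returns the TC's event log.
    static List<String> run(boolean failAfterStockLock) {
        TransactionCoordinator tc = new TransactionCoordinator();
        TransactionManager tm = new TransactionManager(tc);
        tm.runGlobal(xid -> {
            tc.registerBranch(xid, rm("order"));
            tc.registerBranch(xid, rm("ware"));
            if (failAfterStockLock) {
                throw new IllegalStateException("simulated failure");
            }
        });
        return tc.events();
    }

    public static void main(String[] args) {
        System.out.println(run(false));
        System.out.println(run(true));
    }
}
```

The point of the sketch is that only the TM decides the outcome, while the TC fans that decision out to every registered branch.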

RPC (Remote Procedure Call): put simply, one node requests a service provided by another node.

All we need is a single @GlobalTransactional annotation on the business method:

@GlobalTransactional

public void purchase(String userId, String commodityCode, int orderCount) {

......

}

II. Using Seata

1. Create the UNDO_LOG table

Seata AT mode requires an UNDO_LOG table.

-- Note: from 0.3.0+ the unique index ux_undo_log is added

CREATE TABLE `undo_log` (

`id` bigint(20) NOT NULL AUTO_INCREMENT,

`branch_id` bigint(20) NOT NULL,

`xid` varchar(100) NOT NULL,

`context` varchar(128) NOT NULL,

`rollback_info` longblob NOT NULL,

`log_status` int(11) NOT NULL,

`log_created` datetime NOT NULL,

`log_modified` datetime NOT NULL,

`ext` varchar(100) DEFAULT NULL,

PRIMARY KEY (`id`),

UNIQUE KEY `ux_undo_log` (`xid`,`branch_id`)

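) ENGINE=InnoDB AUTO_INCREMENT=1 DEFAULT CHARSET=utf8;

Every microservice that wants to take part in the global transaction needs its own rollback log table, UNDO_LOG, in its database.

Add the undo_log table to the databases of all the microservices involved in our project: gulimall-oms, gulimall-pms, gulimall-ums, gulimall-wms.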
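To make the role of undo_log more concrete: in AT mode the before-image of the rows a branch changes is saved together with the business update, identified by (xid, branch_id). On a global rollback the image is used to restore the rows, and on commit the record is simply deleted. The sketch below simulates this with in-memory maps. The class `UndoLogSketch`, its fields, and the sample values are hypothetical; real Seata serializes the images into `rollback_info` and works through the proxied JDBC connection.

```java
import java.util.HashMap;
import java.util.Map;

class UndoLogSketch {
    // Stands in for a business table: skuId -> locked stock (hypothetical).
    final Map<Long, Integer> stockLocked = new HashMap<>();
    // Stands in for undo_log: "xid:branchId" -> before-image of the touched rows.
    final Map<String, Map<Long, Integer>> undoLog = new HashMap<>();

    // Business update: remember the old value first, then apply the new one.
    void update(String xid, long branchId, long skuId, int newValue) {
        Map<Long, Integer> before =
                undoLog.computeIfAbsent(xid + ":" + branchId, k -> new HashMap<>());
        if (!before.containsKey(skuId)) {
            // null means "row did not exist before this branch"
            before.put(skuId, stockLocked.get(skuId));
        }
        stockLocked.put(skuId, newValue);
    }

    // Global rollback: restore every row from its before-image.
    void rollback(String xid, long branchId) {
        Map<Long, Integer> before = undoLog.remove(xid + ":" + branchId);
        if (before == null) {
            return; // nothing recorded for this branch
        }
        for (Map.Entry<Long, Integer> e : before.entrySet()) {
            if (e.getValue() == null) {
                stockLocked.remove(e.getKey());
            } else {
                stockLocked.put(e.getKey(), e.getValue());
            }
        }
    }

    // Global commit: the data is already in place, only the log is dropped.
    void commit(String xid, long branchId) {
        undoLog.remove(xid + ":" + branchId);
    }

    public static void main(String[] args) {
        UndoLogSketch db = new UndoLogSketch();
        db.stockLocked.put(1L, 5);
        db.update("xid-1", 10L, 1L, 8);
        db.rollback("xid-1", 10L);
        System.out.println(db.stockLocked);
    }
}
```

Keying the log by xid and branch id mirrors the unique index `ux_undo_log (xid, branch_id)` in the table definition.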

2、安装事务协调器

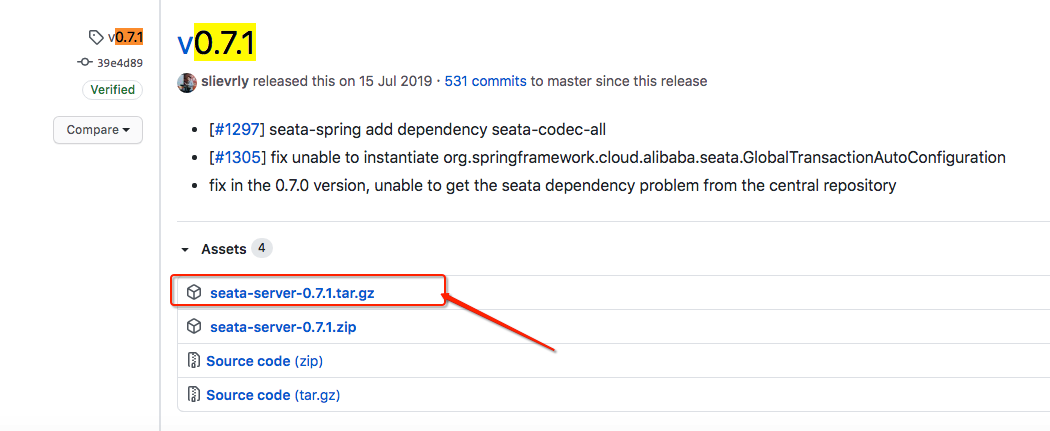

从 https://github.com/seata/seata/releases, 下载服务器软件包,将其解压缩。

Usage: sh seata-server.sh(for linux and mac) or cmd seata-server.bat(for windows) [options]

Options:

--host, -h

The host to bind.

Default: 0.0.0.0

--port, -p

The port to listen.

Default: 8091

--storeMode, -m

log store mode : file、db

Default: file

--help

e.g.

sh seata-server.sh -p 8091 -h 127.0.0.1 -m file

3. Integration

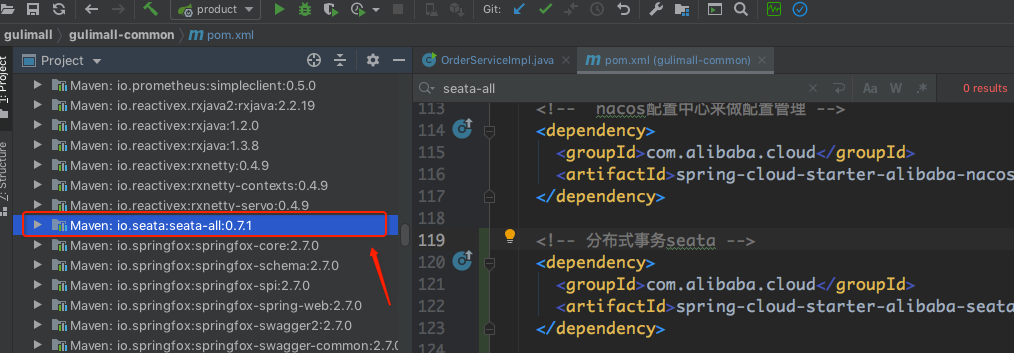

Add the Seata dependency to the gulimall-common module: gulimall-common/pom.xml

<!-- Seata distributed transactions -->

<dependency>

<groupId>com.alibaba.cloud</groupId>

<artifactId>spring-cloud-starter-alibaba-seata</artifactId>

</dependency>

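You can see that the imported Seata version is seata-all-0.7.1, so the Seata server must also be version 0.7.1.

Download seata-server 0.7.1, unzip it, and place the extracted directory under our development directory:

cd /Users/kaiyiwang/javaweb/guli/develop/seata-server-0.7.1/bin

Registry configuration: /seata-server-0.7.1/conf/registry.conf. Set the registry type to nacos: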

registry {

# registry type to use: file, nacos, eureka, redis, zk, consul, etcd3, sofa

type = "nacos"

# if the type is nacos, configure the Nacos server address

nacos {

serverAddr = "localhost:8848"

namespace = "public"

cluster = "default"

}

eureka {

serviceUrl = "http://localhost:1001/eureka"

application = "default"

weight = "1"

}

redis {

serverAddr = "localhost:6379"

db = "0"

}

zk {

cluster = "default"

serverAddr = "127.0.0.1:2181"

session.timeout = 6000

connect.timeout = 2000

}

consul {

cluster = "default"

serverAddr = "127.0.0.1:8500"

}

etcd3 {

cluster = "default"

serverAddr = "http://localhost:2379"

}

sofa {

serverAddr = "127.0.0.1:9603"

application = "default"

region = "DEFAULT_ZONE"

datacenter = "DefaultDataCenter"

cluster = "default"

group = "SEATA_GROUP"

addressWaitTime = "3000"

}

file {

name = "file.conf"

}

}

config {

# config source: file, nacos, apollo, zk, consul, etcd3; this is where Seata's configuration lives, by default the local file file.conf

type = "file"

nacos {

serverAddr = "localhost"

namespace = "public"

cluster = "default"

}

consul {

serverAddr = "127.0.0.1:8500"

}

apollo {

app.id = "seata-server"

apollo.meta = "http://192.168.1.204:8801"

}

zk {

serverAddr = "127.0.0.1:2181"

session.timeout = 6000

connect.timeout = 2000

}

etcd3 {

serverAddr = "http://localhost:2379"

}

file {

name = "file.conf"

}

}

Seata server configuration: /seata-server-0.7.1/conf/file.conf

transport {

# tcp udt unix-domain-socket

type = "TCP"

#NIO NATIVE

server = "NIO"

#enable heartbeat

heartbeat = true

#thread factory for netty

thread-factory {

boss-thread-prefix = "NettyBoss"

worker-thread-prefix = "NettyServerNIOWorker"

server-executor-thread-prefix = "NettyServerBizHandler"

share-boss-worker = false

client-selector-thread-prefix = "NettyClientSelector"

client-selector-thread-size = 1

client-worker-thread-prefix = "NettyClientWorkerThread"

# netty boss thread size,will not be used for UDT

boss-thread-size = 1

#auto default pin or 8

worker-thread-size = 8

}

shutdown {

# when destroy server, wait seconds

wait = 3

}

serialization = "seata"

compressor = "none"

}

service {

#vgroup->rgroup

vgroup_mapping.my_test_tx_group = "default"

#only support single node

default.grouplist = "127.0.0.1:8091"

#degrade current not support

enableDegrade = false

#disable

disable = false

#unit ms,s,m,h,d represents milliseconds, seconds, minutes, hours, days, default permanent

max.commit.retry.timeout = "-1"

max.rollback.retry.timeout = "-1"

}

client {

async.commit.buffer.limit = 10000

lock {

retry.internal = 10

retry.times = 30

}

report.retry.count = 5

}

## transaction log store: where the transaction log is kept

store {

## store mode: file、db

mode = "file"

## file store

file {

dir = "sessionStore"

# branch session size , if exceeded first try compress lockkey, still exceeded throws exceptions

max-branch-session-size = 16384

# globe session size , if exceeded throws exceptions

max-global-session-size = 512

# file buffer size , if exceeded allocate new buffer

file-write-buffer-cache-size = 16384

# when recover batch read size

session.reload.read_size = 100

# async, sync

flush-disk-mode = async

}

## database store

db {

## the implement of javax.sql.DataSource, such as DruidDataSource(druid)/BasicDataSource(dbcp) etc.

datasource = "dbcp"

## mysql/oracle/h2/oceanbase etc.

db-type = "mysql"

url = "jdbc:mysql://127.0.0.1:3306/seata"

user = "mysql"

password = "mysql"

min-conn = 1

max-conn = 3

global.table = "global_table"

branch.table = "branch_table"

lock-table = "lock_table"

query-limit = 100

}

}

lock {

## the lock store mode: local、remote

mode = "remote"

local {

## store locks in user's database

}

remote {

## store locks in the seata's server

}

}

recovery {

committing-retry-delay = 30

asyn-committing-retry-delay = 30

rollbacking-retry-delay = 30

timeout-retry-delay = 30

}

transaction {

undo.data.validation = true

undo.log.serialization = "jackson"

}

## metrics settings

metrics {

enabled = false

registry-type = "compact"

# multi exporters use comma divided

exporter-list = "prometheus"

exporter-prometheus-port = 9898

}

Start the Seata server:

cd /Users/kaiyiwang/javaweb/guli/develop/seata-server-0.7.1/bin

# sh seata-server.sh -p 8091 -h 127.0.0.1 -m file

chmod +x seata-server.sh

sh seata-server.sh -p 8091 -h 127.0.0.1

Output:

2020-09-15 17:31:35.889 INFO [main]io.seata.core.rpc.netty.AbstractRpcRemotingServer.start:179 -Server started ...

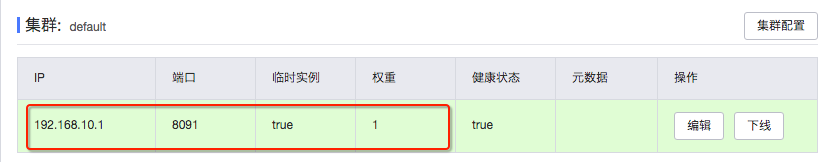

2020-09-15 17:31:35.903 INFO [main]io.seata.common.loader.EnhancedServiceLoader.loadFile:237 -load RegistryProvider[Nacos] extension by class[io.seata.discovery.registry.nacos.NacosRegistryProvider]

Then open the Nacos console: the Seata server has registered with the Nacos registry successfully.

4. Data source proxy

Every microservice that wants to use distributed transactions must wrap its own data source with Seata's DataSourceProxy.

Create the Seata data source configuration class: gulimall-order/xxx/order/config/MySeataConfig.java

package com.atguigu.gulimall.order.config;

import com.zaxxer.hikari.HikariDataSource;

import io.seata.rm.datasource.DataSourceProxy;

import org.springframework.beans.factory.annotation.Autowired;

import org.springframework.boot.autoconfigure.jdbc.DataSourceProperties;

import org.springframework.context.annotation.Bean;

import org.springframework.context.annotation.Configuration;

import org.springframework.util.StringUtils;

import javax.sql.DataSource;

/**

* Seata data source proxy configuration

*

* @doc:

* https://github.com/seata/seata-samples/tree/master/springcloud-jpa-seata

*

* @author: kaiyi

* @create: 2020-09-15 17:44

*/

@Configuration

public class MySeataConfig {

@Autowired

DataSourceProperties dataSourceProperties;

@Bean

public DataSource dataSource(DataSourceProperties dataSourceProperties) {

HikariDataSource dataSource = dataSourceProperties.initializeDataSourceBuilder().type(HikariDataSource.class).build();

if (StringUtils.hasText(dataSourceProperties.getName())) {

dataSource.setPoolName(dataSourceProperties.getName());

}

return new DataSourceProxy(dataSource);

}

}

然后将该配置文件放到需要使用seata服务的配置目录下。

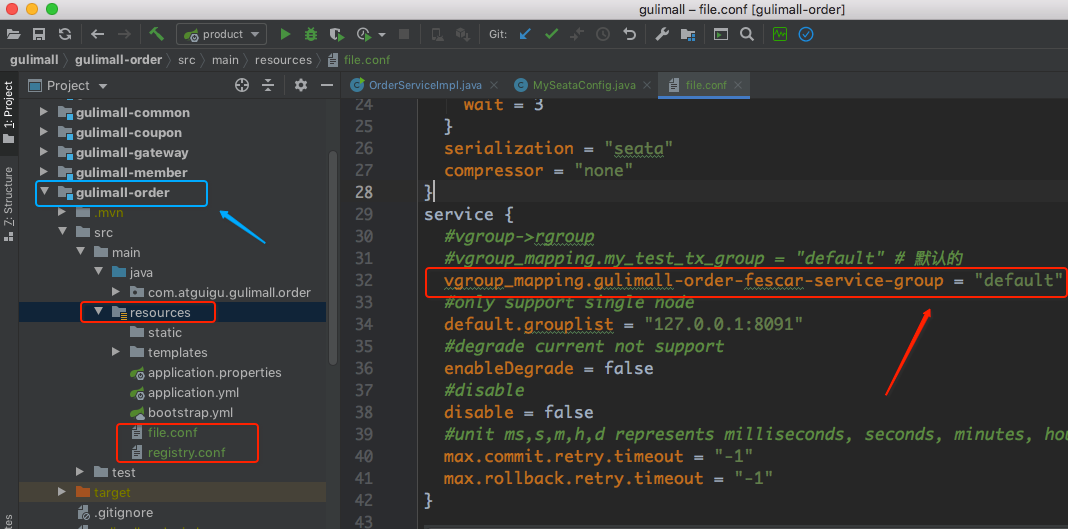

5、微服务导入配置文件

每个微服务,都必须导入 registry.conf , file.conf,并且修改file.conf配置应用名为我们微服务名 vgroup_mapping.{application.name}-fescar-server-group = "default" ,否则会找不到。

file.conf

transport {

# tcp udt unix-domain-socket

type = "TCP"

#NIO NATIVE

server = "NIO"

#enable heartbeat

heartbeat = true

#thread factory for netty

thread-factory {

boss-thread-prefix = "NettyBoss"

worker-thread-prefix = "NettyServerNIOWorker"

server-executor-thread-prefix = "NettyServerBizHandler"

share-boss-worker = false

client-selector-thread-prefix = "NettyClientSelector"

client-selector-thread-size = 1

client-worker-thread-prefix = "NettyClientWorkerThread"

# netty boss thread size,will not be used for UDT

boss-thread-size = 1

#auto default pin or 8

worker-thread-size = 8

}

shutdown {

# when destroy server, wait seconds

wait = 3

}

serialization = "seata"

compressor = "none"

}

service {

#vgroup->rgroup

#vgroup_mapping.my_test_tx_group = "default" # the default

vgroup_mapping.gulimall-order-fescar-service-group = "default"

#only support single node

default.grouplist = "127.0.0.1:8091"

#degrade current not support

enableDegrade = false

#disable

disable = false

#unit ms,s,m,h,d represents milliseconds, seconds, minutes, hours, days, default permanent

max.commit.retry.timeout = "-1"

max.rollback.retry.timeout = "-1"

}

client {

async.commit.buffer.limit = 10000

lock {

retry.internal = 10

retry.times = 30

}

report.retry.count = 5

}

## transaction log store

store {

## store mode: file、db

mode = "file"

## file store

file {

dir = "sessionStore"

# branch session size , if exceeded first try compress lockkey, still exceeded throws exceptions

max-branch-session-size = 16384

# globe session size , if exceeded throws exceptions

max-global-session-size = 512

# file buffer size , if exceeded allocate new buffer

file-write-buffer-cache-size = 16384

# when recover batch read size

session.reload.read_size = 100

# async, sync

flush-disk-mode = async

}

## database store

db {

## the implement of javax.sql.DataSource, such as DruidDataSource(druid)/BasicDataSource(dbcp) etc.

datasource = "dbcp"

## mysql/oracle/h2/oceanbase etc.

db-type = "mysql"

url = "jdbc:mysql://127.0.0.1:3306/seata"

user = "mysql"

password = "mysql"

min-conn = 1

max-conn = 3

global.table = "global_table"

branch.table = "branch_table"

lock-table = "lock_table"

query-limit = 100

}

}

lock {

## the lock store mode: local、remote

mode = "remote"

local {

## store locks in user's database

}

remote {

## store locks in the seata's server

}

}

recovery {

committing-retry-delay = 30

asyn-committing-retry-delay = 30

rollbacking-retry-delay = 30

timeout-retry-delay = 30

}

transaction {

undo.data.validation = true

undo.log.serialization = "jackson"

}

## metrics settings

metrics {

enabled = false

registry-type = "compact"

# multi exporters use comma divided

exporter-list = "prometheus"

exporter-prometheus-port = 9898

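}

The other microservices need these two files as well. When adding them, remember to change the application name in file.conf, for example for the warehouse service:

# warehouse service

vgroup_mapping.gulimall-ware-fescar-service-group = "default"

6. Start and test the distributed transaction

- Annotate the entry point of the overall distributed transaction with @GlobalTransactional

- Annotate each remote local transaction with @Transactional

Project code: gulimall-order/xxx/order/service/impl/OrderServiceImpl.java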

/**

* Submit the order

* @param vo

* @return

*/

// @Transactional(isolation = Isolation.READ_COMMITTED) sets the transaction isolation level

// @Transactional(propagation = Propagation.REQUIRED) sets the transaction propagation behavior

// Start a Seata global transaction

@GlobalTransactional(rollbackFor = Exception.class)

@Transactional

@Override

public SubmitOrderResponseVo submitOrder(OrderSubmitVo vo) {

confirmVoThreadLocal.set(vo);

SubmitOrderResponseVo responseVo = new SubmitOrderResponseVo();

// Create the order, verify the token, verify the price, lock the stock...

// Get the currently logged-in user

MemberResponseVo memberResponseVo = LoginUserInterceptor.loginUser.get();

responseVo.setCode(0);

// 1. Verify the token (comparing and deleting the token must be atomic)

String script = "if redis.call('get', KEYS[1]) == ARGV[1] then return redis.call('del', KEYS[1]) else return 0 end";

String orderToken = vo.getOrderToken();

// Verify and delete the token atomically with a Lua script

Long result = redisTemplate.execute(new DefaultRedisScript<Long>(script, Long.class),

Arrays.asList(OrderConstant.USER_ORDER_TOKEN_PREFIX + memberResponseVo.getId()),

orderToken);

if (result == 0L) {

// Token verification failed

responseVo.setCode(1);

return responseVo;

} else {

// Token verification succeeded

// 1. Create the order, order items, and related data

OrderCreateTo order = createOrder();

// 2. Verify the price

BigDecimal payAmount = order.getOrder().getPayAmount();

BigDecimal payPrice = vo.getPayPrice();

if (Math.abs(payAmount.subtract(payPrice).doubleValue()) < 0.01) {

// The amounts match

//TODO 3. Save the order

saveOrder(order);

// 4. Lock the stock; on any exception, roll back the order data

// Order number and all order item data (skuId, skuNum, skuName)

WareSkuLockVo lockVo = new WareSkuLockVo();

lockVo.setOrderSn(order.getOrder().getOrderSn());

// Collect the items whose stock must be locked

List<OrderItemVo> orderItemVos = order.getOrderItems().stream().map((item) -> {

OrderItemVo orderItemVo = new OrderItemVo();

orderItemVo.setSkuId(item.getSkuId());

orderItemVo.setCount(item.getSkuQuantity());

orderItemVo.setTitle(item.getSkuName());

return orderItemVo;

}).collect(Collectors.toList());

lockVo.setLocks(orderItemVos);

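//TODO Call the remote stock-locking method

// Problem: the stock deduction succeeds, but a network timeout raises an exception, so the order transaction rolls back while the stock transaction does not (solution: Seata)

// For high concurrency Seata is not recommended: it relies on locking, which serializes the work and hurts throughput; sending a message to the warehouse service is an alternative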

R r = wmsFeignService.orderLockStock(lockVo);

if (r.getCode() == 0) {

// Locking succeeded

responseVo.setOrder(order.getOrder());

// int i = 10/0;

// Remove the items from the shopping cart

redisTemplate.delete(CartConstant.CART_PREFIX + memberResponseVo.getId());

return responseVo;

} else {

// Locking failed

String msg = (String) r.get("msg");

throw new NoStockException(msg);

// responseVo.setCode(3);

// return responseVo;

}

} else {

responseVo.setCode(2);

return responseVo;

}

}

}

Stock-locking logic: gulimall-ware/xxx/ware/service/impl/WareSkuServiceImpl.java

/**

* Lock stock for an order

* @param vo

* @return

*/

@Transactional(rollbackFor = Exception.class)

@Override

public boolean orderLockStock(WareSkuLockVo vo) {

// 1. Based on the order's delivery address, find a nearby warehouse and lock stock there

// 2. Find which warehouses have stock for each item

List<OrderItemVo> locks = vo.getLocks();

List<SkuWareHasStock> collect = locks.stream().map((item) -> {

SkuWareHasStock stock = new SkuWareHasStock();

Long skuId = item.getSkuId();

stock.setSkuId(skuId);

stock.setNum(item.getCount());

// Query which warehouses have stock for this item

List<Long> wareIdList = wareSkuDao.listWareIdHasSkuStock(skuId);

stock.setWareId(wareIdList);

return stock;

}).collect(Collectors.toList());

// 2. Lock the stock

for (SkuWareHasStock hasStock : collect) {

boolean skuStocked = false;

Long skuId = hasStock.getSkuId();

List<Long> wareIds = hasStock.getWareId();

if (wareIds == null || wareIds.isEmpty()) {

// No warehouse has stock for this item

throw new NoStockException(skuId);

}

for (Long wareId : wareIds) {

// Returns 1 if the lock succeeds, 0 if it fails

Long count = wareSkuDao.lockSkuStock(skuId,wareId,hasStock.getNum());

if (count == 1) {

skuStocked = true;

break;

} else {

// Locking failed in this warehouse, try the next one

}

}

if (skuStocked == false) {

// No warehouse could lock stock for this item

throw new NoStockException(skuId);

}

}

// 3. At this point every item has been locked successfully

return true;

}

@Data

class SkuWareHasStock {

private Long skuId;

private Integer num;

private List<Long> wareId;

}

Debugging:

Problem:

After adding Seata, the service fails at startup with io.seata.common.exception.FrameworkException: can not register RM,err:can not connect to services-server.

Solution:

References: (1) https://github.com/seata/seata/issues/2522 (2) https://seata.io/zh-cn/docs/overview/faq.html

https://github.com/seata/seata-samples/tree/master/springcloud-jpa-seata

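The service.vgroup_mapping entry in file.conf must match spring.application.name.

In the org.springframework.cloud.alibaba.seata.GlobalTransactionAutoConfiguration class of org.springframework.cloud:spring-cloud-starter-alibaba-seata, ${spring.application.name}-fescar-service-group is used by default as the transaction service group name when registering with the Seata server. If it does not match the configuration in file.conf, a "no available server to connect" error is reported.

The group name can also be changed through spring.cloud.alibaba.seata.tx-service-group, but it must still match the configuration in file.conf.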
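A quick way to catch this mismatch before starting a service is to derive the expected key and look for it in file.conf. The helper below is only a sketch: `TxServiceGroupCheck` and its methods are hypothetical names, the `-fescar-service-group` suffix is the default described above for this starter version, and the line-by-line scan is a simplification that does not parse the full config syntax.

```java
class TxServiceGroupCheck {
    // Default suffix described in this article for the starter version in use (assumption).
    static final String DEFAULT_SUFFIX = "-fescar-service-group";

    // Key that file.conf is expected to contain for the given application.
    // txServiceGroup models spring.cloud.alibaba.seata.tx-service-group; null or empty means "not set".
    static String expectedKey(String applicationName, String txServiceGroup) {
        String group = (txServiceGroup == null || txServiceGroup.isEmpty())
                ? applicationName + DEFAULT_SUFFIX
                : txServiceGroup;
        return "vgroup_mapping." + group;
    }

    // True if some non-comment line of file.conf assigns the expected key.
    static boolean isMapped(String fileConf, String applicationName, String txServiceGroup) {
        String key = expectedKey(applicationName, txServiceGroup);
        for (String line : fileConf.split("\\R")) {
            String trimmed = line.trim();
            if (trimmed.startsWith("#")) {
                continue; // commented-out mappings do not count
            }
            int eq = trimmed.indexOf('=');
            if (eq > 0 && trimmed.substring(0, eq).trim().equals(key)) {
                return true;
            }
        }
        return false;
    }

    public static void main(String[] args) {
        String conf = "service {\n"
                + "  #vgroup_mapping.my_test_tx_group = \"default\"\n"
                + "  vgroup_mapping.gulimall-order-fescar-service-group = \"default\"\n"
                + "}\n";
        System.out.println(isMapped(conf, "gulimall-order", null));
        System.out.println(isMapped(conf, "gulimall-ware", null));
    }
}
```

With the sample file.conf fragment in `main`, the order service finds its mapping and the warehouse service does not, which is the situation that leads to the connection error described above.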

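Summary:

With Seata in place, testing shows that when the later points step fails, the stock change made through the remote call is rolled back as well, so Seata is quite powerful.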


Free to repost: non-commercial, no derivatives, attribution required (Creative Commons 3.0 license)